In the computer graphics (CG) animated comedy "Ted," which is now showing in cinemas, Ted is a teddy bear who came to life as the result of a childhood wish of John Bennett (Mark Wahlberg) and has refused to leave his side ever since. Since the blockbuster "Avatar," with its blue-skinned computer-animated characters, won three Oscars and brought in nearly three billion US dollars, digitally animated characters like Ted have become a standard of Hollywood movie productions.

While movies like "Pirates of the Caribbean" or "Ted" still combined real actors with digital counterparts, the well-known director Steven Spielberg relied entirely on virtual actors in "The Adventures of Tintin." He used the so-called motion capture approach, which was also used to animate Ted. In motion capture, an actor wears a suit with special markers attached. These markers reflect infrared light that is emitted and recorded by a camera system installed in the studio. In this way, the system captures the actor's movements. Specialists then use these recordings as input to transfer exactly the same movements to the virtual character.

"Actors dislike wearing these suits because they restrict their movements," explains Christian Theobalt, professor of computer science at Saarland University and head of the research group "Graphics, Vision & Video" at the Max Planck Institute for Informatics (MPI). Theobalt points out that this has not changed since "Gollum" was animated for the "Lord of the Rings" trilogy. Together with his MPI colleagues Nils Hasler and Carsten Stoll, and with Jürgen Gall of the Swiss Federal Institute of Technology Zurich, Theobalt has therefore developed a new approach that works without markers and captures motion in real time. "The scientifically new part is the way in which we represent and compute the filmed scene. It makes it possible to capture and visualize movements with normal video cameras at a new speed," Theobalt explains.

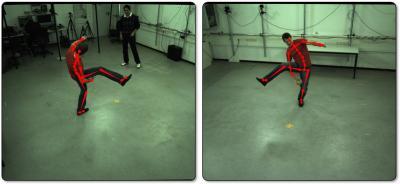

In practice, it looks like this: The video cameras record a researcher turning cartwheels. The computer receives the camera footage as input and computes the actor's skeleton motion so quickly that no delay is perceptible between the movement and its overlay, a red skeleton. According to Theobalt, the new approach also works if the movements of several people have to be captured, if the people are partly hidden by objects in the studio, or if they are filmed against a cluttered background.

Computer scientists at the Max Planck Institute for Informatics in Saarbrücken can compute the actor's skeleton motion in real time.

(Photo credit: MPI)

"This is why we are convinced that our approach could even enable motion capture outdoors, for example in the Olympic stadium," Theobalt points out. Athletes could use it to analyze their movements in order to run faster, jump higher or throw the javelin farther. Spectators in the stadium or in front of the TV could use the technology to tell first and second place apart in a close finish. Beyond entertainment, medicine could also benefit from the new approach, for example by helping doctors check how joints heal after surgery.

In the coming months, his MPI colleagues Nils Hasler and Carsten Stoll will found a company to turn the software prototype into a commercial product. "They have already met with representatives of several Hollywood companies," Theobalt says.

Technical background

The new approach requires only fairly inexpensive hardware. No special cameras are needed, but their recordings have to be synchronized. According to the MPI researcher, five cameras are enough for the approach to work, although the team used twelve cameras for the published results. What makes the difference is how the scene is represented for the computer and how it is computed. To this end, the researchers build a three-dimensional model of the actor whose motion is to be captured. The result is a motion skeleton with 58 joints. The shape of the body is modeled as a so-called sum of three-dimensional Gaussians, each of which can be visualized as a ball. The radius of each ball is adapted to the body dimensions of the real person. The resulting three-dimensional model resembles the mascot of a famous tire manufacturer.
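The article does not give the researchers' formulas, so the following is only a rough illustration of what a "sum of Gaussians" body model means. It assumes isotropic (ball-shaped) Gaussians with unnormalized values; the class and function names, the three-ball forearm and its sizes are hypothetical, and rotating the balls about a single joint is a simplified stand-in for driving them with the 58-joint skeleton.

```python
import math
from dataclasses import dataclass

@dataclass
class Gaussian3D:
    """One isotropic 3D Gaussian 'ball' of a sum-of-Gaussians body model (hypothetical)."""
    center: tuple  # (x, y, z) position in metres
    sigma: float   # standard deviation; a larger sigma gives a bigger ball

    def value(self, p):
        # Unnormalized Gaussian: exactly 1 at the center, decaying with squared distance.
        d2 = sum((a - b) ** 2 for a, b in zip(p, self.center))
        return math.exp(-d2 / (2.0 * self.sigma ** 2))

def body_density(model, p):
    """The body 'shape' at point p is simply the sum of all ball contributions."""
    return sum(g.value(p) for g in model)

def rotate_about_x(model, angle):
    """Bend the limb at a joint located at the origin by rotating every ball
    center about the x axis (simplified stand-in for a skeleton pose)."""
    c, s = math.cos(angle), math.sin(angle)
    posed = []
    for g in model:
        x, y, z = g.center
        posed.append(Gaussian3D((x, c * y - s * z, s * y + c * z), g.sigma))
    return posed

# Toy forearm: three balls along the z axis, tapering toward the wrist.
# These sigmas are made up; a real system would fit them to the person.
forearm = [Gaussian3D((0.0, 0.0, 0.0), 0.045),
           Gaussian3D((0.0, 0.0, 0.12), 0.04),
           Gaussian3D((0.0, 0.0, 0.24), 0.03)]

print(body_density(forearm, (0.0, 0.0, 0.12)))  # high: inside the forearm
print(body_density(forearm, (0.3, 0.0, 0.12)))  # near zero: well outside it

bent = rotate_about_x(forearm, math.pi / 2)     # bend the "elbow" by 90 degrees
print(body_density(bent, (0.0, -0.12, 0.0)))    # high again: the forearm moved here
```

Because the model is just a short list of centers and radii, changing the pose only moves a few dozen numbers, which is part of what makes such a representation cheap to work with.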

The video camera images are represented as two-dimensional Gaussians, each covering an image blob of roughly uniform color. To capture the person's movement, the software continuously computes the pose in which the 2D Gaussians and the projected 3D Gaussians overlap as accurately as possible. The Saarbrücken computer scientists can compute these model-to-image similarities very efficiently. As a result, they can capture the filmed motion and visualize it in real time. All they need is a few cameras, some computing power and mathematics.
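A plausible reason such similarities can be computed quickly is that the overlap of two Gaussians, i.e. the integral of their product over the image plane, has a closed-form solution, so no pixel-by-pixel comparison is needed. The sketch below uses that formula for isotropic 2D Gaussians. The pinhole projection, the color weight, all function names and the brute-force search over a single sideways shift are hypothetical simplifications, not the researchers' implementation, which optimizes full skeleton poses with a much faster method.

```python
import math

def overlap_2d(mu_i, sigma_i, mu_j, sigma_j):
    """Closed-form integral over the image plane of the product of two
    unnormalized isotropic 2D Gaussians exp(-|x - mu|^2 / (2 sigma^2))."""
    s2 = sigma_i ** 2 + sigma_j ** 2
    d2 = (mu_i[0] - mu_j[0]) ** 2 + (mu_i[1] - mu_j[1]) ** 2
    return 2.0 * math.pi * sigma_i ** 2 * sigma_j ** 2 / s2 * math.exp(-d2 / (2.0 * s2))

def project(center, sigma, focal):
    """Approximate a 3D ball's image as a 2D Gaussian, assuming a pinhole
    camera at the origin looking along +z (simplifying assumption)."""
    x, y, z = center
    return (focal * x / z, focal * y / z), focal * sigma / z

def color_weight(c_i, c_j, tol=0.1):
    """Hypothetical color term: 1 for identical RGB colors, near 0 for very different ones."""
    d2 = sum((a - b) ** 2 for a, b in zip(c_i, c_j))
    return math.exp(-d2 / (2.0 * tol ** 2))

def similarity(model, image_blobs, focal):
    """Model-to-image similarity: color-weighted overlap of every projected
    3D ball with every 2D image blob."""
    total = 0.0
    for center, sigma, color in model:
        mu_m, sigma_m = project(center, sigma, focal)
        for mu_b, sigma_b, color_b in image_blobs:
            total += color_weight(color, color_b) * overlap_2d(mu_m, sigma_m, mu_b, sigma_b)
    return total

def best_shift(model, image_blobs, focal, candidates):
    """Toy 'pose' fit: pick the sideways shift (in metres) with the highest similarity.
    Brute force stands in for the real system's optimizer."""
    def shifted(dx):
        return [((x + dx, y, z), s, c) for (x, y, z), s, c in model]
    return max(candidates, key=lambda dx: similarity(shifted(dx), image_blobs, focal))

# Usage: two colored balls 2 m from the camera, "filmed" after moving 0.1 m sideways.
model = [((0.0, 0.0, 2.0), 0.05, (1.0, 0.0, 0.0)),
         ((0.0, 0.3, 2.0), 0.05, (0.0, 0.0, 1.0))]
image_blobs = [project((x + 0.1, y, z), s, 1000.0) + (c,) for (x, y, z), s, c in model]
estimate = best_shift(model, image_blobs, 1000.0, [i * 0.05 for i in range(-4, 5)])
print(estimate)  # → 0.1
```

Each similarity evaluation costs one short formula per pair of Gaussians, independent of image resolution, and the result is a smooth function of the pose, which is what allows gradient-based optimizers to replace the brute-force search used here.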

Source: Saarland University